This post was originally published in 2016 as part of the Edgar Learn series, where we shared the strategies that helped us find success. Although a lot has changed since then, it seems this MailChimp hack for A/B testing we discovered back in 2016 can still work today, so we decided to keep it online for you to try.

We absolutely adore MailChimp over here at MeetEdgar. It is without a doubt one of the most important tools for our business.

But when we started really digging into MailChimp A/B testing and sending MailChimp test emails, we ran into a little problem.

MailChimp doesn’t allow for A/B testing of automated campaigns, and that is STILL true in 2021. They only allow multivariate testing on single emails.

It makes sense – the cardinal rule of A/B testing is that you only want to change one variable at a time, and the very nature of automated campaigns (sending several emails in a row, automatically) makes that very difficult to do.

However, where there is a will, there is a way, and we discovered a little trick you might want to add to your arsenal of MailChimp hacks.

How to hack MailChimp A/B testing

Say you had a series of three emails and wanted to test changes to all three. To change only one variable at a time, you would need to set up a test that looked something like this:

| Email 1 | Email 2 | Email 3 |
| --- | --- | --- |
| Control | Control | Control |
| Test | Control | Control |
| Test | Test | Control |
| Test | Test | Test |
| Control | Test | Test |
| Control | Control | Test |
| Control | Test | Control |
| Test | Control | Test |

Where “Control” was the standard version of the email, and “Test” was the new version we wanted to try.

That’s eight different variations (two versions of each of three emails), which means we’d be splitting traffic eight different ways, which means it would take a while to get a meaningful sample size. And since each one of these series already took several days to complete, we were looking at a very long time to run each test properly.
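To make the cost of a full grid concrete, here is a minimal Python sketch that enumerates every Control/Test assignment for a series and estimates how long each setup takes to fill. The function names, the 500-subscribers-per-group target, and the 100 signups per day are all hypothetical numbers chosen for illustration, not figures from our own list.

```python
from itertools import product
from math import ceil


def full_factorial(n_emails):
    """Every way to give each email in the series a Control or Test version."""
    return list(product(("Control", "Test"), repeat=n_emails))


def days_to_fill(arms, per_arm, daily_signups):
    """Days until every arm has `per_arm` subscribers, with signups split evenly."""
    return ceil(arms * per_arm / daily_signups)


# Hypothetical numbers, purely for illustration.
PER_ARM = 500
DAILY_SIGNUPS = 100

grid = full_factorial(3)
print(len(grid), "arms:", days_to_fill(len(grid), PER_ARM, DAILY_SIGNUPS), "days")
print(2, "arms:", days_to_fill(2, PER_ARM, DAILY_SIGNUPS), "days")
```

The time to fill every group grows in direct proportion to the number of groups, and that is before counting the days each email series takes to finish sending.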

So we decided to fudge things a little bit, and to make our tests simply look like this:

| Email 1 | Email 2 | Email 3 |
| --- | --- | --- |
| Control | Control | Control |
| Test | Test | Test |

Please don’t call the Testing Police on us! We know it’s not the most scientific way to run A/B tests in MailChimp.

Our justification, other than general simplicity and speed of testing, was that we were also looking for big changes to our conversion rates. When you’re looking for a BIG change, it becomes a little less important to isolate exactly what element you’re changing in the test.

How We Tricked MailChimp Into Running These Tests

Because MailChimp wasn’t set up to allow us to run tests at all on automated emails, we had to come up with a workaround. So here’s what we did…

Our testing has focused primarily on our invite series.

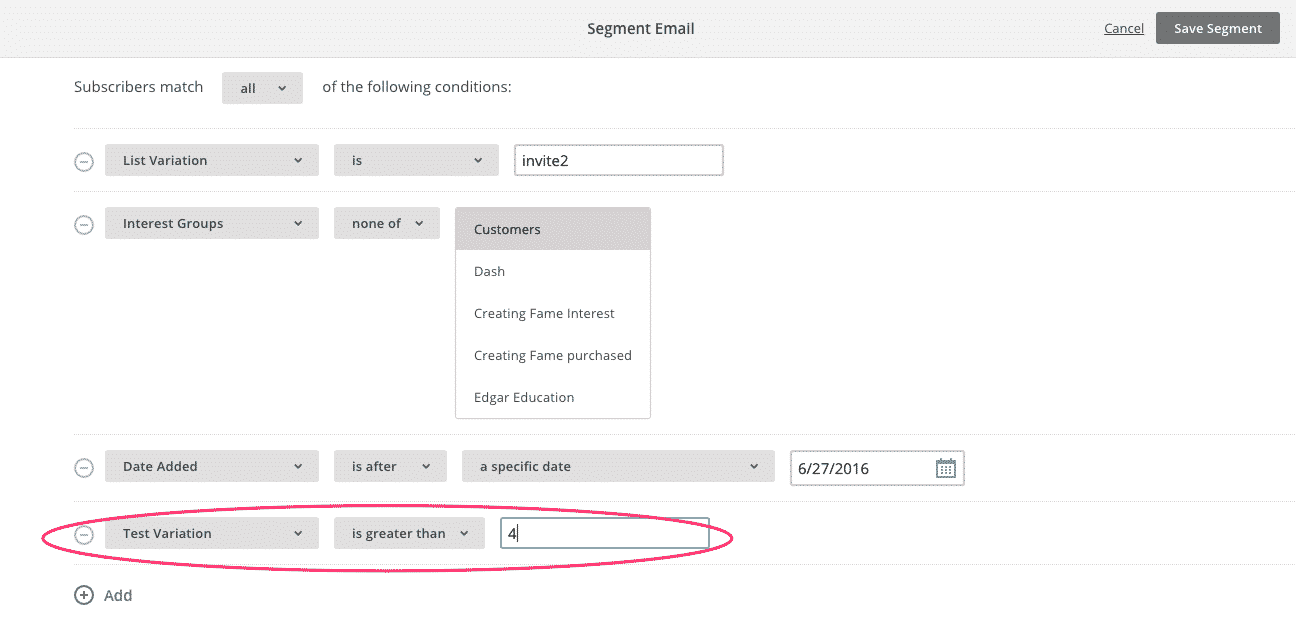

When you visited MeetEdgar.com, you were invited to sign up for an invitation to use Edgar. When you signed up for an invite, you were assigned a number (the Test Variation). This was done through a little behind-the-scenes magic our WordPress expert had developed.

When we created the segments for the test campaigns in MailChimp, we used this Test Variation number to split you into either a test group or the control group. Then we sent you the appropriate drip campaign.

To reference our examples above once more, if we were running a by-the-books scientific study, we would have needed a separate group for every variation of our testing flow. But in our simplified version, we only needed two Test Variations to get the results we wanted.

So we could have just assigned everyone either a one or a two. But that’s not what Hackerman would do!

Instead, we assigned everyone a number between one and six. Because six divides evenly by both two and three, this gave us an even (and random) split between two groups and allowed for three-way split testing as well if we were feeling particularly… testy.

Ones, twos, and threes went into our control group and received the same emails we usually sent. Fours, fives, and sixes went into the experimental group and received our all-new flow.
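Our actual number generator lived in our WordPress code and isn't reproduced here, so treat the following as a rough Python sketch of the same idea rather than what we ran. The function names are hypothetical, and passing the number to MailChimp as a merge field is left out.

```python
import random

CONTROL = "control"
TEST = "test"


def assign_variation(rng=random):
    """Pick a Test Variation number from 1 to 6, each equally likely."""
    return rng.randint(1, 6)


def group_for(variation):
    """Two-way split: 1-3 go to the control group, 4-6 to the test group."""
    if not 1 <= variation <= 6:
        raise ValueError(f"variation must be 1-6, got {variation}")
    return CONTROL if variation <= 3 else TEST


def three_way_group_for(variation):
    """Three-way split of the same numbers: 1-2 -> 'a', 3-4 -> 'b', 5-6 -> 'c'."""
    if not 1 <= variation <= 6:
        raise ValueError(f"variation must be 1-6, got {variation}")
    return "abc"[(variation - 1) // 2]


# Assign a number at signup time, then look up the group it falls into.
variation = assign_variation()
print(variation, group_for(variation), three_way_group_for(variation))
```

Storing the raw number instead of the group name is what keeps the same field reusable for both two-way and three-way tests.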

Here’s what MailChimp’s Segment tool looks like:

Note that you only need to do this for the first email in the automated series! All of the other emails are connected to this email, making it a little easier to split up your flows for testing. Thanks, MailChimp!

How We Measured Success

The trickiest part of our hacked-together testing process was measuring the results.

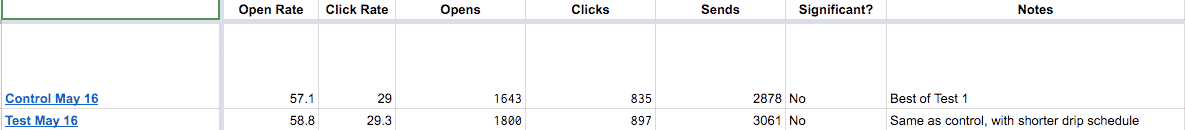

We were looking at two main things: Open Rate and Click Rate.

Open Rate is the percentage of recipients who open each email, and Click Rate is the percentage who click on a link within each email. These are pretty obviously vital stats, right?

Measuring success was tricky because we needed concrete numbers to work with, but MailChimp gives these numbers as percentages. So we built a spreadsheet that took the percentage rates and the total number of emails sent, and gave back actual numbers for clicks and opens. It looked like this:

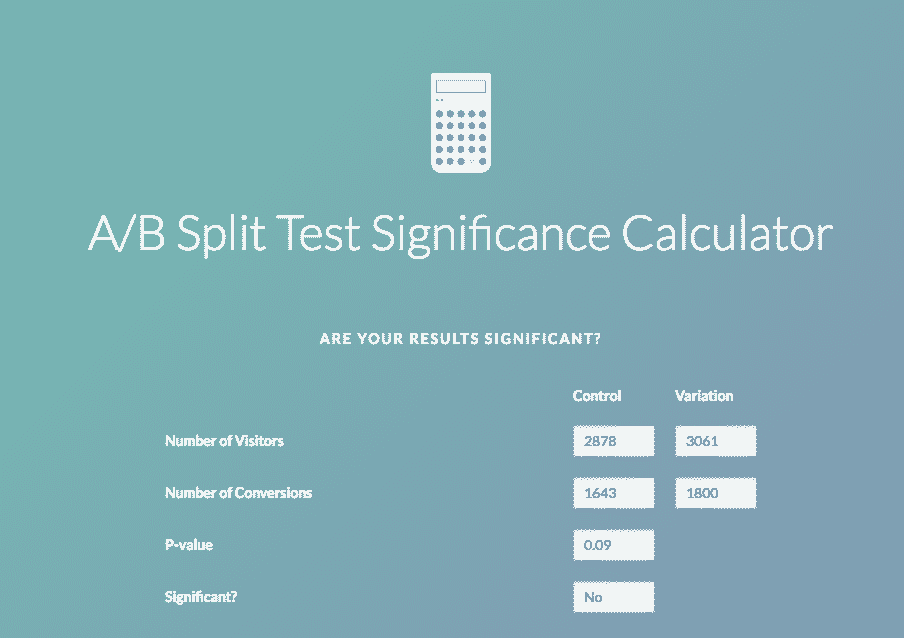
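The spreadsheet's core calculation is simple enough to sketch in a few lines of Python. The function name and the sample figures below are made up for illustration and are not taken from our real reports.

```python
def rate_to_count(rate_percent, emails_sent):
    """Turn a percentage rate back into a whole number of opens or clicks."""
    if not 0 <= rate_percent <= 100:
        raise ValueError("rate_percent must be between 0 and 100")
    return round(emails_sent * rate_percent / 100)


# Hypothetical example: 1,200 emails sent, 42.5% open rate, 6% click rate.
sent = 1200
opens = rate_to_count(42.5, sent)
clicks = rate_to_count(6, sent)
print(opens, clicks)
```

Because the reported percentages are already rounded, the recovered counts can be off by a few in either direction on a large send.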

So why did we need the actual number of opens and clicks? Because we then took those numbers and fed them into VWO’s A/B Split Test Significance Calculator to see if our results were statistically significant.

Here’s what that looked like:

Without getting too mathtastic, this calculator basically figures out if your sample sizes are big enough to show a real change. In the above example, they were not – which is why our “Significant?” column in the spreadsheet also shows a “No”.
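For the curious, a check of this kind can be sketched with a standard two-proportion z-test. This is an assumption about the general approach, not a description of what VWO's calculator computes internally, and the 0.05 threshold, the function names, and the sample counts are all illustrative choices.

```python
from math import erf, sqrt


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the assumption that both groups behave the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (p_b - p_a) / se
    # Standard normal CDF, written in terms of the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


def is_significant(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """True when the p-value falls below the chosen threshold (assumed 0.05)."""
    return two_proportion_p_value(conv_a, n_a, conv_b, n_b) < alpha


# Hypothetical counts: 100 clicks from 1,000 control emails vs. 105 from 1,000 test emails.
print(is_significant(100, 1000, 105, 1000))
```

A small difference on a small sample will come back as not significant, which is the same answer our “Significant?” column was recording.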

We keep running a test and hand-feeding the numbers into the spreadsheet and calculator until it shows significance, or we decide that it’s gone on long enough to show that there’s no real impact from the change. And sometimes, that can be a pretty valuable lesson as well!

Our Biggest Lesson So Far

We’ve gleaned a lot of useful information from our hacked-together testing, including some classic MailChimp subject line testing and changing around the content of specific emails. But in the overall scheme of things, our most valuable learnings so far have come from a test that showed no significant change in performance – that very same test we’ve been using as an example in the above images.

In this test, the only thing that was different between our two campaigns was the amount of time between each email. In our control, we had three emails spread out over roughly the course of a month. In our test, we condensed that schedule down to 10 days.

And guess what? The results were pretty much the same.

Essentially, we learned that we could reduce the timeframe of our intro email campaign to roughly one-third of its original length with no negative effects. So of course that became our new standard! Not only did this help bring in new customers more quickly, but it also made our future tests run more quickly as well. And that’s the sort of thing that pays dividends well down the road.

Want to have some fun with MailChimp as well? Then give this automated campaign testing trick a shot!

Have you tried testing automated campaigns in MailChimp?

If you read this post back in 2016 and gave our testing tips a go, we’d love to hear how you got on. Did you discover something useful, fun, interesting?

If you’re trying this little hack out for the first time now, let us know your feedback in the comments! We love learning from your experiments.

6 Comments

Great post. Shouldn’t the segment say “greater than OR EQUAL TO” 4 in order to get an even split?

We are using a slightly easier method than generating random numbers with Hackerman code. We just divide our list into two test group segments based on first name initial. A-J and K-Z are close to 50/50. (Not truly random, but good enough.) The segments auto-populate, and the automation is replicated and assigned a segment to split test.

Great idea! Using names can work well, but for some lists it can result in very lopsided testing. When in doubt, create a segment and preview the results before sending.

Ok so how can we assign the variation number ourselves? Makes much more sense to do this.

From our very own Hackerman: Essentially, the test variation is a standard numeric merge field in MC. You can assign numbers to it manually, but if you kick off automations right away, like we do, you’ll want to auto-generate the numbers in your website code and pass them to MC that way.

Nice post and a great idea. It’d be lovely to have an initial pointer/bit more info on HOW to “auto-generate the numbers in [our] website code”?!

Also, MailChimp does give you the specific open and click rates (not just percentages), both for the workflow in total and on a per email basis; head to “Reports” in your dashboard, then change from “Campaigns” to the third option, “Automation” and bosh! (click the specific workflow to get single-email breakdown stats)